A MatSing antenna (white ball on the right-hand side of the structure) hangs from the light tower at Raymond James Stadium in Tampa, Fla., during Super Bowl LV. Credit: MatSing

And even as the carriers continue to report numbers with parameters that make them hard to analyze, the bottom line from Sunday’s big game, which had 24,835 fans in attendance, was a combined total of 17.7 terabytes for AT&T and Verizon (T-Mobile reported no numbers), about half of last year’s total.

Once again, an apples-to-apples comparison is impossible: Verizon’s reported total of 4G and 5G data used, 7 TB, covers Raymond James Stadium only, while AT&T reported 10.7 TB of 4G and 5G data used in an area “in and around the stadium,” without specifying how far “around the stadium” extends.

Still, even taking the highest totals, this year’s traffic pales in comparison with recent Super Bowls: reported cellular traffic was above 35 TB for AT&T and Verizon last year and somewhere north of 50 TB two years ago, when Sprint (now part of T-Mobile) also reported numbers.

Verizon did say that 56 percent of attendees were Verizon customers, which, using the official attendance as a starting point, works out to 13,907 Verizon customers at the game and gives us a chance to do some bandwidth-per-user math. Our unofficial calculations show Verizon customers using an average of 503 megabytes each, a fairly solid figure compared with last year’s average Super Bowl Wi-Fi usage of 595.6 MB per user. (Total Wi-Fi usage for Super Bowl LV has not yet been reported.) According to Verizon, its 5G customers saw an average download speed of 817 Mbps, with peak speeds reaching “over 2 Gbps.”
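The per-user estimate above is simple arithmetic, and the sketch below reproduces it under a few loudly stated assumptions: decimal units (1 TB = 1,000,000 MB), a customer count truncated to a whole person, and all 7 TB attributed to Verizon customers inside the stadium. The function names are hypothetical and only for illustration; this is not Verizon’s methodology.

```python
# Rough per-user estimate for Verizon at Super Bowl LV, using the figures
# reported above. ASSUMPTIONS: decimal units (1 TB = 1,000,000 MB), every
# Verizon customer in attendance counts as one "user", and all 7 TB came
# from those customers. Helper names are hypothetical.

ATTENDANCE = 24_835       # official attendance
VERIZON_SHARE = 0.56      # Verizon's reported share of attendees
VERIZON_TB = 7.0          # Verizon 4G + 5G data, stadium only
MB_PER_TB = 1_000_000     # decimal terabyte (assumption)


def verizon_customers(attendance: int, share: float) -> int:
    """Estimated Verizon customers, truncated to a whole person."""
    return int(attendance * share)


def mb_per_user(total_tb: float, users: int) -> float:
    """Average megabytes used per customer."""
    return total_tb * MB_PER_TB / users


customers = verizon_customers(ATTENDANCE, VERIZON_SHARE)
avg_mb = mb_per_user(VERIZON_TB, customers)

print(customers)       # 13907
print(round(avg_mb))   # 503
```

Using binary units (TiB and MiB) instead would yield a somewhat larger per-user number, which is one more reason to treat the figure as a ballpark rather than a precise measurement.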

AT&T, meanwhile, claimed an average 5G download speed of 1.261 Gbps for its customers, with a peak download speed of 1.71 Gbps. However, since AT&T gave no way to estimate how many of its customers were at the game, it’s hard to compare its speeds directly with Verizon’s: there is no way to know how many devices AT&T’s network had to support. T-Mobile, which claimed before the game that it had done as much as anyone to support its customers at the game with 5G service, does not report traffic statistics from big events.

Interesting parking-lot poles and MatSings for the field

As part of the connectivity expansion ahead of the Super Bowl, poles like the one seen here may have 4G LTE, 5G and Wi-Fi for outside-the-venue coverage. Credit: ConcealFab

Though not every pole had every bit of equipment, according to ConcealFab the enclosures “conceal low & mid-band 4G radio equipment and has a pole top shroud that contains omnidirectional 4G and public WiFi signals. 5G radios are mounted with shrouds that have clearWave™ technology that has been tested and approved for mmW frequency.” The company said that some of the poles had lights and security cameras mounted atop them as well.

Inside, the poles were a veritable United Nations of supplier gear. According to ConcealFab, here’s which suppliers brought what to the table:

— Ericsson radios (mmW) inside modular shrouds

— CommScope radios (AWS, PCS, CBRS) in the base and along the pole body

— JMA canister antenna inside the pole top concealment

— Extreme Networks access points and Wi-Fi antenna inside the pole top concealment

— Leotek LED luminaires

— Axis cameras

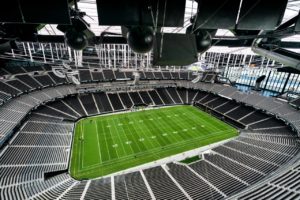

We’d also like to note that MatSing Lens antennas once again played a role in cellular coverage, with a couple of the distinctive ball-shaped devices at Raymond James Stadium used to cover the field. MatSing antennas, which have recently been installed at Allegiant Stadium and AT&T Stadium, have been part of the past four Super Bowls by our count.