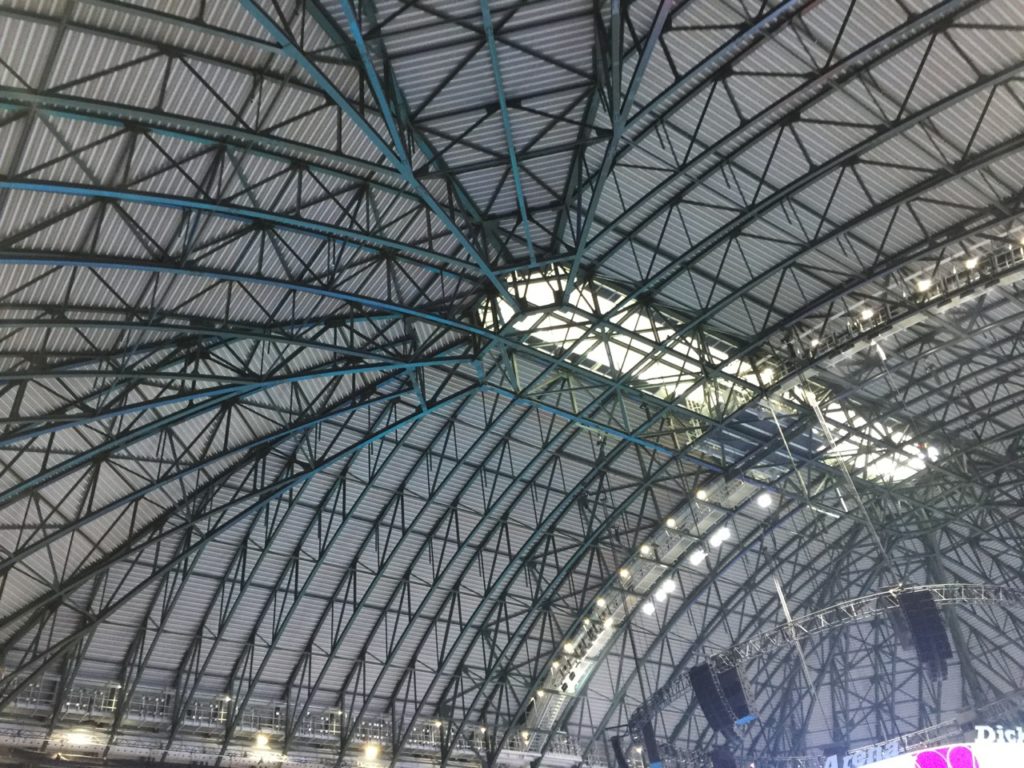

Dickies Arena, now open in Fort Worth, Texas, has a single converged fiber backbone to bring order and efficiency to its networking needs. Credit all photos: Paul Kapustka, MSR

With its soaring roof and its high-end cosmetic finishes, Fort Worth’s new Dickies Arena will be a wonder to look at for fans of all events that will take place there.

But what may be even more impressive, certainly from an IT perspective, is something you can’t see: The single, converged fiber network that supports all network operations, including the cellular DAS, the arena Wi-Fi and the IPTV operations, in an orderly, future-proofed way.

Built by AmpThink for the arena, the network is a departure from what has long been the norm in venue IT deployments, where multiple service providers typically build their own networks, with multiple cabling systems competing for conduit space. At Dickies Arena, AmpThink was able to control the fiber systems to follow a single, specific path, allowing the company to save costs and space for the client while building out a system with enough extra capacity to handle future needs for bandwidth, according to AmpThink.

“This is really our master class [on stadium network design],” said AmpThink president Bill Anderson, during a September MSR visit and tour of the almost-ready arena.

If you’re not familiar with the Dickies Arena story, the 14,000-seat arena is part of a public-private venture between the city of Fort Worth and a consortium of investors and donors led by local Fort Worth philanthropist Ed Bass. Though it doesn’t have a professional basketball or hockey tenant, the NBA-sized venue will fill an arena-sized need for events in the growing Fort Worth area, while also serving as the new home for the Fort Worth Stock Show and Rodeo.

Following the lead of AT&T Stadium, where high-end finishes were a hallmark of Dallas Cowboys owner Jerry Jones’ influence, Dickies Arena appears to take cosmetic matters a full step further, with intricate tile flooring and art-quality finishes on areas like stairway handrails and bar facades. During an early September walkaround, as workers completed finishing touches like polishing concrete floors to make the surfaces shine, MSR also got to see the results of the owners’ request for “not having a single cellular or Wi-Fi antenna visible,” according to AmpThink’s Anderson.

No fiber allowed outside of the single path

Editor’s note: This report is from our latest STADIUM TECH REPORT, an in-depth look at successful deployments of stadium technology. Included with this report is a profile of the new Wi-Fi 6 network at Ohio Stadium, and an in-person research report on the new Wi-Fi network at Las Vegas Ballpark.

In the suite and concourse areas, for example, Wi-Fi APs and DAS antennas are hidden behind ceiling panels, with no electronics in sight. But what’s even more impressive from an engineering and construction standpoint is what’s happening further down the network path from the endpoints, where all cable and fiber follows a structured pathway, first to an IDF and then back to the head end rooms in the arena’s basement.

“No fiber is allowed to follow a path that doesn’t tie to an IDF, or directly to the head end,” said Anderson. “And we didn’t allow DAS vendors to be outside the closet. It’s the venue’s fiber network. Nobody else could come in and build their own.”

Looking back from the end of the project, it’s clear why you might want to pursue such a path: With a single, converged network, design, planning and eventually operations are streamlined, since there aren’t multiple infrastructures to deploy and maintain. The conditions also allowed AmpThink to fully pre-design the network and to do much of the construction work, like splicing, cable measurement and cutting, beforehand – according to Anderson, there was not a single fiber termination done in the field.

“For venues it used to be, use the ‘brute force’ method and just go figure it out in the field,” Anderson said.

At Dickies Arena, that method simply wasn’t used. In addition to fiber cabling and splicing work, AmpThink also built many custom enclosures to simplify installation while complying with the strict aesthetic requirements. The company has a large machine shop at its Dallas-area headquarters where it can design and manufacture parts like metal wiring boxes and the plastic enclosures it uses for stadium Wi-Fi and DAS deployments.

“AmpThink helped us think proactively so we are prepared to build on this solid foundation for the future,” said Matt Homan, president and general manager of Trail Drive Management Corp (TDMC), the not-for-profit operating entity for Dickies Arena. “This has allowed us to have a much more cost-effective approach, which is important for us as a 501c3 organization operating Dickies Arena. The AmpThink team has done a phenomenal job of assisting with the architectural integrity of the building to ensure that no Wi-Fi or DAS antennas were seen.”

Jeff Alexander, senior vice president at ExteNet Systems, said Dickies Arena was the first time ExteNet ever participated in a converged network design for a large public venue. But Alexander also said ExteNet, which is responsible for the DAS design and 5G cellular installations at Dickies Arena, had years of experience in situations where service providers had to work together.

“Most [other] DAS deployments give no consideration for Wi-Fi, or anything else,” said Alexander in a phone interview. “Given ExteNet’s experience and our track record, these are things we were forced to think about 10 years ago.”

According to Alexander, the directive to work with a single converged fiber network wasn’t “harder” than a regular installation.

“It was unique,” Alexander said of the Dickies Arena installation experience. “It made us think of things we hadn’t thought about, and challenged us to consider other things than the typical DAS installation, which isn’t a bad thing. I consider it a success.”

At Dickies Arena, the DAS uses the Corning ONE DAS hardware system, with approximately 500 active antennas in 12 zones.

As future-proofed as possible

As part of the overall fiber network design, AmpThink’s Anderson said the company maximized capacity throughout the building, with hundreds of extra fiber strands available to support future capacity needs. By using optical fiber with hundreds of strands wound together – including some stretches with 864 different fiber strands inside a single cable – AmpThink actually saved time, money and space by preventing the need for additional infrastructure or future cable pulls.

“The bulk of the cost [of fiber deployments] is the labor to pull the fiber,” Anderson said. By using large-bundle fiber, Anderson said AmpThink was able to drive the cost per strand to “a very low number,” while also freeing up conduit space, since a single large-bundle cable takes up far less space than multiple smaller-bundle cables, each of which must have its own insulation.

While ExteNet’s Alexander contends that no network design can ever be truly “future-proofed” – if you ask him he will tell you a story about a large sports venue where ExteNet is currently replacing 864-strand fiber put in 5 years ago with 1,728-strand fiber – he does agree that putting in as much fiber as the design and cost allows buys a venue time to support the always-growing demand for bandwidth.

“The industry is full of venues that didn’t do that, and 12 months later they’re expanding their fiber plant,” Alexander said. AmpThink’s Anderson noted that even during the arena’s construction, there were demands for additional fiber – such as for a densification in the LED ribbon boards – that were easily addressed.

“People came back to us, and said they needed more fiber, and we had it to give to them, no problem,” Anderson said. “It didn’t cost us a lot to do it [add in more fiber strands]. It’s a model everyone should look at.”

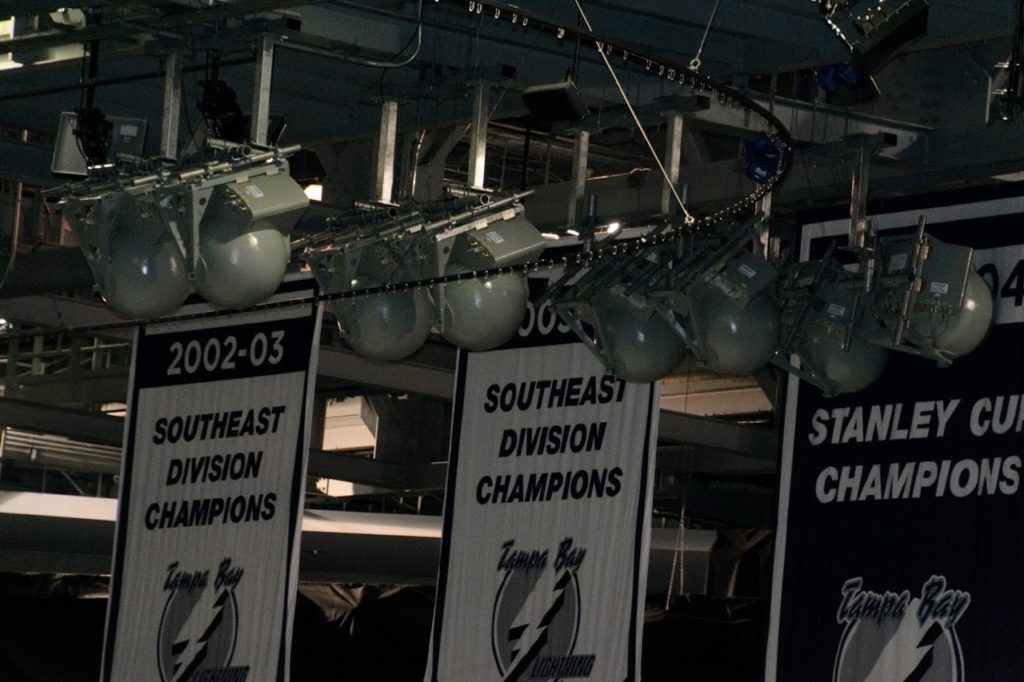

The rodeo will be the main event at Dickies Arena every year

The soaring, open rooftop is meant to mimic the wide open skies of Texas

The AmpThink-designed and manufactured cabling cabinets helped complete the ‘master class’ installation