On the press bus to the stadium for the semifinals. Credit all photos: Paul Kapustka, MSR

(If you need to catch up, here is part 1 of this missive)

Sunday, April 7: Geeking out on Wi-Fi 6

If Saturday had been all about walking around, my Final Four Sunday was all about staying in. But the day of relative inaction on the basketball court played right into my strategy for the weekend, which was: Find a way to maximize my four days in Minneapolis to get the most work done possible.

Sunday, that meant I was all in with the AmpThink team, basically on two levels. One, I wanted to get a real in-depth look at the temporary Wi-Fi network the company had installed at U.S. Bank Stadium to cover the seats that weren’t part of the stadium’s usual football configuration. For the Final Four, that meant extra seats along the courtside “sidelines” that actually were erected over the lower-bowl football seats and then extended out to the edge of the hardwood floor, as well as all the temporary seats in each end zone that stretched the same way out to the basketball court.

After a “team breakfast” at a great breakfast-diner kind of place, the AmpThink team and I got inside the arena in a break between practices (you are not allowed near the court when practices are going on) and I got an up-close look at how AmpThink stretched the network from the football configuration out to the temporary Final Four floor. Though AmpThink covered most of the bowl seating at U.S. Bank Stadium with innovative railing-mounted antenna enclosures (which Verizon copied when it added DAS capacity ahead of Super Bowl 52, which was held in the stadium the year before), for the temporary seating AmpThink went with an under-seat design, with AP boxes located under the folding chairs and switches located underneath the risers.

The temporary network, as it turned out, worked very well, but the funniest story to come out of the deployment was one of theft: after Saturday’s games the network analysis showed one of the APs offline. Further exploration by the AmpThink team found that the AP itself was no longer around; some net-head fan had apparently discovered that the under-seat enclosures were not secured, and for some reason thought that a Cisco Wi-Fi AP would make for a fine Final Four gift to take home. My guess is that future temporary networks might see some zip-ties used to lock things down.

After a cool tour underneath the temporary stands to see how AmpThink wired things, we spent the better part of the afternoon hanging out and talking about Wi-Fi 6, a topic the AmpThink brain trust was well wired on. Eventually that day of brainstorming, interviewing and collaboration led to the joint AmpThink/MSR Wi-Fi 6 Research Report, which of course you may download for free.

It was the best possible use I could think of for the “day off” Sunday, where if you are involved with the Final Four you are basically waiting around until Monday night. And since the AmpThink team is rarely ever in one place together for a full day (later that year, for example, AmpThink would be busy deploying new networks at Ohio State, Oklahoma, Arkansas and Dickies Arena), it was an extremely cool opportunity to be able to spend time tapping the knowledge of AmpThink president Bill Anderson and his top lieutenants.

Still feeling the physical effects of my Saturday, and knowing Monday would be even more taxing, I headed back to the hotel in the late afternoon, catching the end of the women’s Final Four at the second of the two local brewpubs next to the Marriott. Though the championship game wouldn’t take place until Monday evening, I had an early start ahead of a long day of, again, maximizing those stories.

Monday, April 8: Allianz Field, the Mall of America, and the championship game

Every quarter, Mobile Sports Report tries to find a good mix of profiles to educate its readership. Typically we try to keep the profiles in season, for relevance and timing. But other times, you just go get a good story because it’s interesting. Or, if you can, you do multiple stories on one plane ticket, something that speaks to the bottom line of being an entrepreneurial startup that has to keep an eye on the budget.

So while other “media” at the Final Four may have been taking late breakfasts or hitting the gym Monday morning, I was in an Uber out to Allianz Field, the new home of the MLS Minnesota United. Though it wasn’t scheduled to open until later in April, the folks behind the networking technology — a local company called Atomic Data — had agreed to give MSR a look-around at the Wi-Fi deployment, a great opportunity we couldn’t pass up.

Yagya Mahadevan, enterprise project manager for Atomic Data and sort of the live-in maestro for the network at Allianz Field, met us at the entry gate and gave us the full stadium walk-around, which was great to have, bad hip issues be damned. I really liked the tour and being able to write the story about how Atomic Data got its feet in the door at a major professional venue, and hope the company can do the same for other venues in the future. I’m also hoping to get back to Allianz Field for a live game when such things start happening again, because the place just looks sharp and I am kind of all in on the way MLS teams are tapping into the fan experience without charging hundreds of dollars a seat like some other pro leagues in the U.S.

After an hour or so of touring Allianz Field it was back in another Uber to the Mall of America, where I had scheduled an interview with Janette Smrcka, then the information technology director for the Mall. (Janette is now part of the technology team at SoFi Stadium, and we hope to have more talks with her soon!) Janette, who I had gotten to know while reporting on the Wi-Fi deployment at the Mall of America, had told me about a cool new project involving wayfinding directories at the Mall, a story which fit perfectly with the new Venue Display Report series we were launching last year.

After sitting down with Janette to get the specifics on the display gear I went into the Mall itself and wandered around for a while (OK, I also did stop to get a chocolate shake at the Shake Shack) watching people use the directories. My unscientific survey showed that people used them quite a bit, with all the design elements from Janette and her team coming into play, like deducing that people would be more willing to use smaller-sized displays since they could shield them with their bodies, making the interaction more private. Little things do matter in technology, and it’s not always the technology that matters.

In the mall you couldn’t forget what was going on that weekend — as if the fans wandering around in their school gear would let you. I jumped back on the light rail to get back to the hotel and had my media-celebrity moment heading up to my room, when John Feinstein himself held the door to the elevator so I could get there in time.

Wi-Fi, hoops and a brat and a beer

As soon as I got to the stadium on the press bus I skipped the whole press working-room thing and headed up to the football press box to secure a spot. Turns out I didn’t need to worry as most of the media still either wanted to be closer to the court or closer to the workroom to get their stories done on deadline. Fine for all of us. By now I had completely learned all the elevator and escalator pathways I needed to know to get around the stadium in record time. I took Wi-Fi speedtests, I took DAS speedtests, I watched the crowd get into the excitement of being at the “big game.”

For sure, part of the fun of attending bucket-list events these days is tied to the mobile device. A big part of the fun. I watched many, many people take pictures of themselves and their companions, take pictures or videos of the action on the court, or just (in some cases) walk around with their phones on video broadcast, relaying the live scene to an audience of who knows who. To me that’s one of the main points of these networks our industry sets up and runs: enabling those who are lucky enough to be there live to share that experience, somewhat instantly, with those closest to them (or their imagined wider audiences).

Though these stadium visits can sometimes be lonely and somewhat strange (I mean, who’s there to cheer for the Wi-Fi?), at the Final Four I considered myself part of the general audience, a witness to the fun and excitement of “being there.” And by halftime I had already done all the “work” I needed to do (the Wi-Fi was strong, as was the DAS), so I camped out in the press box and waited for the second half to begin, so I could go out and get the bratwurst and beer I felt I’d earned.

It took a little bit of walking around to find the stands I wanted to hit — I wanted a beverage that was local, not national, and a brat done right — and I found both somewhat fortunately close to the press box. I took my bounty to a stand-up counter space located just off the main upper concourse and for the time of my meal I was just another hoops fan, enjoying the close contest between Virginia and Texas Tech. Then it was back to the press box and more just-fan watching, an exciting finish and then trying to capture the perfect “confetti burst” photo for the cover of our upcoming issue.

After goodbyes to David and his crew and the AmpThink team, since I didn’t have any stories to write I was on the first press bus back to the hotel, where I quickly crashed ahead of my flight back home Tuesday morning. It was a long weekend in Minneapolis and my hip hurt, but I had done what I needed to do, with a notebook full of stories that I could write while I recovered from the upcoming surgery.

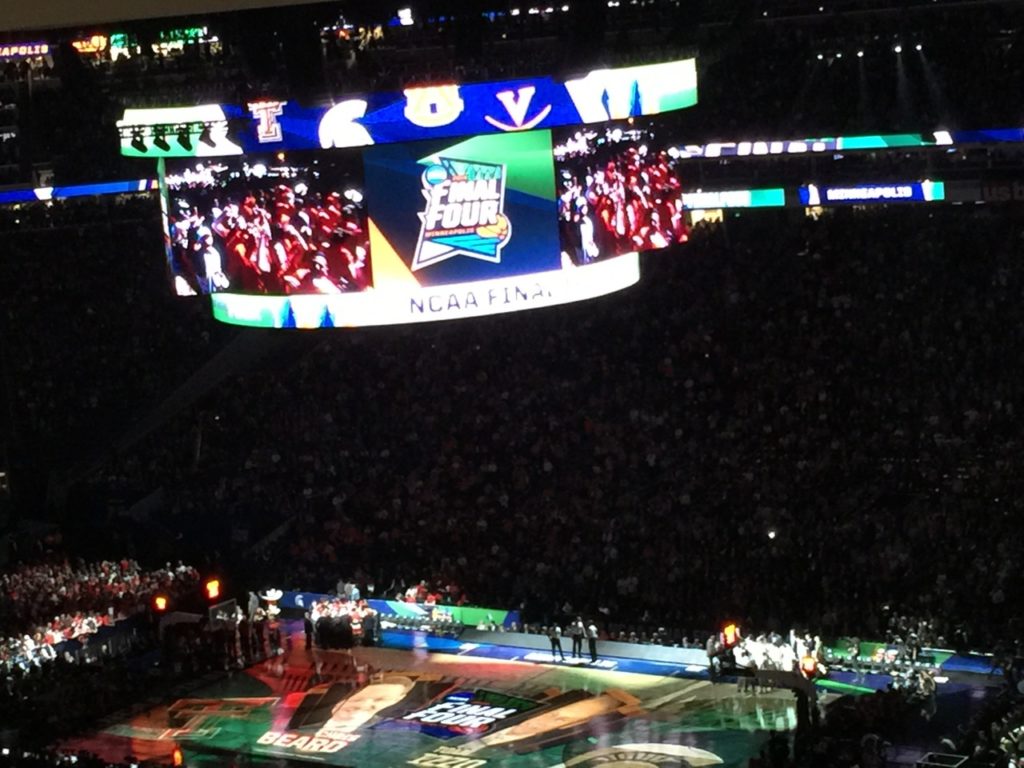

It’s hard to take a photo showing how a Final Four feels in a football stadium, but this isn’t bad

Showtime for the championship game

Any questions that Minneapolis knows how to do brats right?

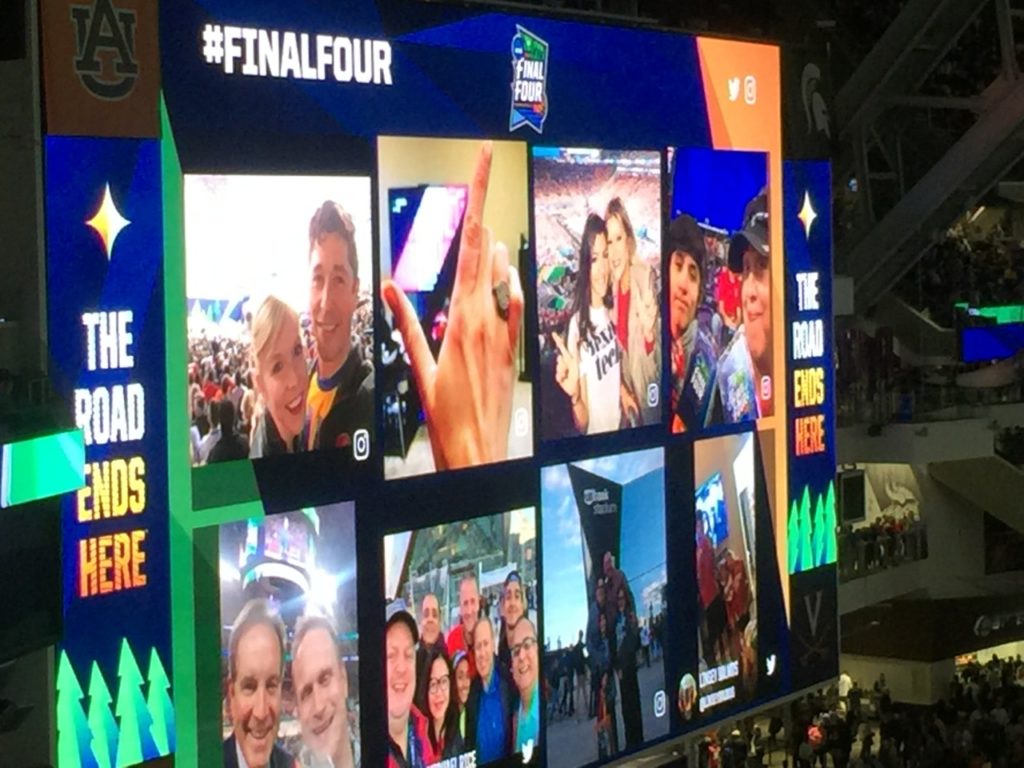

The big football displays couldn’t be used while game action was in play, but during timeouts they were on, sometimes showing cool social media posts

The well-deserved Final Four MSR approved dinner